Perfectly Aligning AI’s Values With Humanity’s Is Impossible – Maybe the best we can do is make “neurodiverse” systems that challenge each other

...

IEEE Spectrum: You and your colleagues have now shown that misalignment of AI systems is inevitable, because any AI system complex enough to display general intelligence will produce unpredictable behavior. Your proof rests on two famous sets of premises—Gödel’s incompleteness theorems, which found that every mathematical system will have statements that can never be proven, and Turing’s undecidability result for the halting problem, which found that some problems are inherently unsolvable.

Zenil: The conventional wisdom assumes misalignment is a bug that can eventually be removed with the right optimization strategy. Our results show that the problem of alignment is not simply a lack of better data, more compute, or better engineering, but a limit built into both formal systems and universal computation. What I am arguing is that for sufficiently general AI systems, some degree of misalignment is structural, so the task shifts from elimination to management.

IEEE Spectrum: Can you describe your strategy of managed misalignment?

Zenil: Once perfect alignment looked unattainable in principle, the next move was obvious—stop trying to perfect one agent and start designing the ecology around it. This is what it would take to achieve any degree of controllability, and controllability has to come from outside, given the intrinsic impossibility of controlling from the inside. You see similar strategies in biology and medicine, where robust results often come from interacting systems rather than a single master controller.

The simplest way to put it is this: Do not trust one supposedly perfect AI to govern everything. Instead, build a structured ecosystem of different agents with different “values” that monitor, challenge, and constrain one another, much like courts, auditors, and competing institutions do in human society. None of them is perfect on its own, but their managed interaction can make the whole arrangement safer than any single dominant model.

The main thing not to misunderstand is that managed misalignment does not mean giving up on safety or letting AI behave however it likes. It means replacing the fantasy of absolute control with a more realistic form of distributed control. In that sense, it is not less serious about safety, but more serious about what safety actually requires.

IEEE Spectrum: How did you test your strategy?

...

Zenil: This work is not anti-AI. It is anti-naivety about control.

See the full story here: https://spectrum.ieee.org/amp/ai-alignment-2676752963

How A.I. Is Transforming China’s Entertainment Industry

...

Until recently, making a hit microdrama — the soapy, short-form, made-for-mobile shows that have become wildly popular in China — meant hiring actors, renting sets and spending weeks filming and editing.

Now, some Chinese companies are churning them out for $30 a minute, with no cameras, no crew and no human performers.

That’s thanks to artificial intelligence. ...

Most A.I.-generated microdramas, which under Chinese law are required to be labeled as such, attract little attention. But some clips have racked up hundreds of millions of views, in a country where people are generally more optimistic about A.I. than in the West. The Chinese market for A.I. microdramas is expected to be worth more than $3 billion this year, according to Chinese state media, out of a more than $14 billion market for microdramas in general.

But recently, the surge has also set off an outcry in China. Actors say their work opportunities have dried up. Celebrities and ordinary people alike have threatened legal action after discovering their likenesses in A.I.-generated microdramas. (ByteDance has since introduced restrictions on using real people’s faces in Seedance.) ...

Mr. Li said he did not oppose the use of A.I. in entertainment but thought the industry was applying it in the wrong way.

“They’re still just imitating humans or trying to make things more humanlike,” he said. “They should be trying to unleash more imagination, taking a more unconventional route.”

He continued: “After all, our fundamental value as humans is in our ability to imagine.” ...

Part of the reason he had turned to the shorter format — microdrama episodes are usually one or two minutes long — was for the quick returns. ...

He hopes to make projects that combine A.I. with live action. He is working on a project that he said is similar to “Stuart Little,” the film that featured an animated mouse alongside real actors.

“If we could feel the warmth of a real performance, and also see the power of A.I. technology, I think that would be great,” he said. ...

Some of Mr. Hou’s creative employees have backgrounds in filmmaking, but others are simply people “who are obsessed with A.I.,” he said. ...

“This work isn’t exactly traditional screenwriting. Part of it requires translating into a language that A.I. can understand,” he said. “People who don’t have a traditional directing or screenwriting background might actually be better at it.”

Producing a 100-minute animated series takes about one month and three employees. Realistic ones take around five people, because it is more labor-intensive to create images that are good enough. ...

And people would gradually find ways to adapt to the employment pains, too, he said. He himself had previously worked for big tech companies in Beijing before he was forced out by cutbacks and pivoted to A.I. filmmaking.

“The impact on employment — there definitely will be an impact,” Mr. Hou said. “But for individuals, what can you do? You can only embrace this new era and think about how to adapt.” ...

See the full story here: https://www.nytimes.com/2026/05/03/world/asia/china-microdrama-ai-backlash.html

Silicon Valley Is Bracing for a Permanent Underclass

...

“Whenever someone wrote a paper which talked about some negative aspect of A.I., he would say, ‘We’re not going to release something about a problem until we have a solution for it,’” said an employee who worked with Mr. Lehane, and who spoke on the condition of anonymity to discuss internal deliberations. Mr. Lehane characterized his approach differently: He wanted the economists on OpenAI’s global affairs team to “inform smart public-policy making,” not conduct “niche” academic research.

...

And what if we don’t act? What if we “let technology rip”? What if millions of people do lose their jobs to A.I., and nobody puts up the money or policy solutions to help them? In March, the Palantir chief executive, Alex Karp, spoke on a panel with the Teamsters president, Sean O’Brien. “The biggest challenge to A.I. in this country is political unrest,” Mr. Karp said. “If I were sitting here in private with my peers, I’d be telling them the country could blow up politically and none of us are going to make any money when the country blows up.”

See the full story here: https://www.nytimes.com/2026/04/30/opinion/ai-labor-work-force-silicon-valley.html

Canadian Feds reveal 6 pillars for long-touted, repeatedly delayed national AI strategy

...

The federal government said it will achieve those objectives through six pillars:

- Protecting Canadians and safeguarding our democracy.

- Empowering Canadians.

- Powering AI adoption for shared prosperity.

- Building the Canadian sovereign AI foundation.

- Scaling Canadian champions.

- Building trusted partnerships and global alliances.

...

See the full story here: https://www.cbc.ca/news/politics/ai-strategy-pillars-evan-solomon-9.7180418

OpenAI Publishes 5 Principles For Its AGI Push

...

The Five Principles Announced This Week

The company says it will resist concentrated power and wants major AI decisions shaped through democratic processes, not only by AI labs, as part of its first principle of democratization. ...

The second principle, empowerment, gives users broad latitude. OpenAI says people should have meaningful control over how they use AI. Yet the same section ties that freedom to a duty to reduce catastrophic harm, local harm and social damage. ...

The third principle, universal prosperity, links AI access to massive infrastructure buildout and lower compute costs. ...

The fourth principle, resilience, moves the company closer to the language of national security. OpenAI points to biological risk, cybersecurity and critical infrastructure. It says no single lab can secure the future alone, and it wants to put in oversight to ensure that any society-wide harms can be detected early and mitigated easily. ...

The fifth principle, adaptability, is the most revealing. OpenAI says it will change course as it learns and that some periods may require placing resilience ahead of empowerment. This means that the closer AI gets to AGI capabilities, the more access may become conditional.

...

See the full story here: https://www.forbes.com/sites/ronschmelzer/2026/04/27/openai-publishes-five-principles-for-its-agi-push/

OpenAI just changed its principles. Here’s what’s changing

...

Both versions of the company’s principles say that OpenAI’s mission is to guarantee this technology “benefits all of humanity,” but the 2018 version explicitly mentions building it safely and beneficially.

“Our primary fiduciary duty is to humanity,” the document reads. “We anticipate needing to marshal substantial resources to fulfil our mission, but will always diligently act to minimise conflicts of interest among our employees and stakeholders that could compromise broad benefit.”

The 2026 version, however, said it needs to continue to build safe systems, but that society needs to contend with “each successive level of AI capability, understand it, integrate it, and figure out the best path forward together.” ...

The way forward, as CEO and cofounder Sam Altman sees it in 2026, is to democratise AI at all levels by giving everyone access to it and resisting the idea that the technology could “consolidate power in the hands of the few”. ... [philNote: "resistance is useless."]

AGI has a “ring of power” to it that “makes people do crazy things,” Altman wrote. To fight back, he said the only solution is to “orient towards sharing the technology with people broadly, and for no one to have the ring.” ...

See the full story here: https://ca.news.yahoo.com/openai-just-changed-principals-changing-112531234.html

Why Cohere is merging with Aleph Alpha

Canadian AI startup Cohere is taking over Germany-based Aleph Alpha, with the blessing of their governments, in a bid to offer a sovereign alternative to enterprises in an AI landscape dominated by American players. “Sovereign AI” refers to systems where companies and governments retain full control over their own data — rather than routing it through U.S. tech giants like Microsoft or Google. ...

While Cohere reported $240 million in annual recurring revenue in 2025, Aleph Alpha had previously generated little revenue and significant losses. But investors are betting that teaming up will improve their odds against much larger rivals. ...

The new entity plans to target highly regulated industries, including defense, energy, finance, healthcare, manufacturing and telecommunications, as well as the public sector. ...

According to Gomez, “Cohere will become a Canadian-German company.” But that promise could be harder to keep if the company goes public — putting ownership in the hands of global shareholders with no particular allegiance to either country.

See the full story here: https://techcrunch.com/2026/04/25/why-cohere-is-merging-with-aleph-alpha/

The core thesis: this isn’t just a merger—it’s a geopolitical AI move

The merger between Cohere and Aleph Alpha is fundamentally about “sovereign AI”—not just scaling models.

- Governments (especially in Europe) want AI systems they control

- Enterprises in regulated sectors want data residency + compliance guarantees

- There’s growing resistance to dependence on U.S. hyperscalers

...

See the full story here: https://chatgpt.com/?utm_source=Daily+Email+2025&utm_campaign=dd635137fe-EMAIL_CAMPAIGN_2026_04_27_01_24&utm_medium=email&utm_term=0_-dd635137fe-303719286&mc_cid=dd635137fe&mc_eid=116e9f337b&model=gpt-5-3

How Anthropic Thinks About Agents, Workflows, and Tasks

...

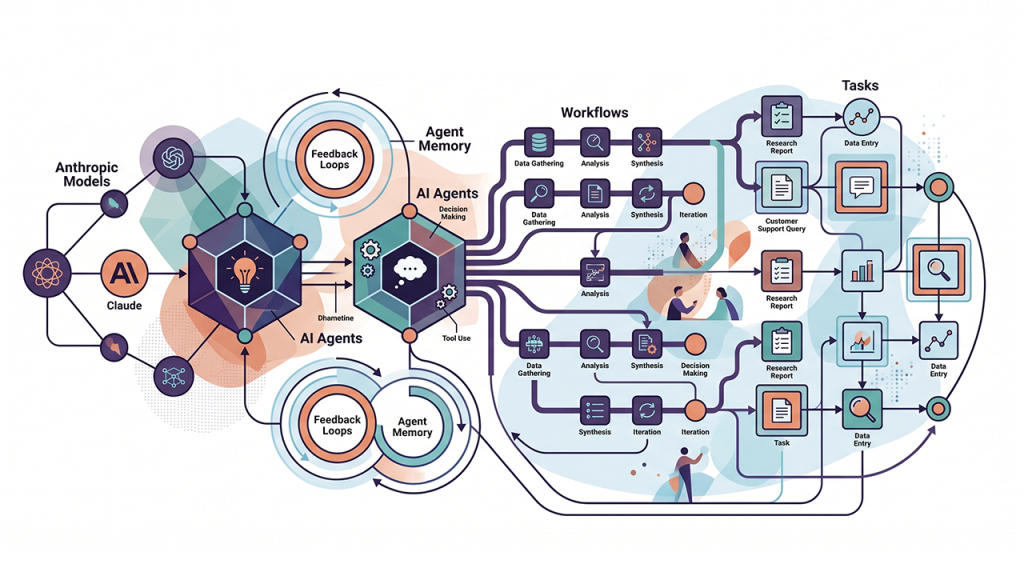

Task – A single model call: Summarize this. Classify that. Extract these fields. Two years ago this felt like magic. Today it is table stakes. The cost is predictable and the failure modes are bounded.

Workflow – Multiple model calls in a predefined control flow. You decide the steps, the model fills them in. Route the customer email to the right queue, draft a response, check the response against policy, then send. The control flow is yours. The intelligence happens at each node.

Agent – A model using tools in a loop, deciding its own trajectory. You give it a goal, a set of tools, and a system prompt. It decides what to do next based on what just happened. The control flow belongs to the model. ...
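
The three tiers above differ mainly in who owns the control flow, which a short sketch may make easier to see. The code below is only an illustration, not Anthropic's implementation: `fake_model` is a hypothetical offline stand-in for a real model API call, and the function names, prompts, and the `lookup` tool are all assumptions made up for this example.

```python
from typing import Callable, Dict, List


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a model call; it pattern-matches on the prompt
    so the example runs offline and deterministically."""
    if prompt.startswith("classify:"):
        return "billing" if "invoice" in prompt.lower() else "general"
    if prompt.startswith("draft:"):
        return "Thanks for writing. We are looking into it."
    if prompt.startswith("check:"):
        return "ok"
    if prompt.startswith("agent:"):
        # Pretend the model picks its next action from what has happened so far.
        return "finish" if "lookup ->" in prompt else "lookup"
    return ""


def classify_email(email: str) -> str:
    """Task: a single model call."""
    return fake_model(f"classify: {email}")


def handle_email(email: str) -> dict:
    """Workflow: several model calls, but the code fixes the order of the steps."""
    queue = fake_model(f"classify: {email}")   # route to a queue
    draft = fake_model(f"draft: {email}")      # draft a response
    verdict = fake_model(f"check: {draft}")    # check the draft against policy
    return {"queue": queue, "reply": draft, "sent": verdict == "ok"}


def run_agent(goal: str, tools: Dict[str, Callable[[str], str]],
              max_steps: int = 5) -> List[str]:
    """Agent: the model chooses the next tool in a loop until it decides to stop."""
    history: List[str] = []
    for _ in range(max_steps):  # hard cap so the loop always terminates
        choice = fake_model(f"agent: goal={goal}; history={'; '.join(history)}")
        if choice == "finish" or choice not in tools:
            break
        history.append(f"{choice} -> {tools[choice](goal)}")
    return history
```

In `handle_email` the sequence of steps is written by the programmer, while in `run_agent` the next step depends on what the model returns. That is why the sketch adds a `max_steps` cap: once the trajectory belongs to the model, the caller needs some outside bound on it.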

China blocks Meta from acquiring AI startup Manus

...

In a one-line statement, China's National Development and Reform Commission, the country's top planning agency, said it was prohibiting a foreign acquisition of Manus and had required all the parties to withdraw from the deal. It did not specifically name Meta Platforms, which owns Facebook and Instagram.

The decision was made by the commission's Office of the Working Mechanism for Security Review of Foreign Investment in accordance with Chinese laws and regulations, the statement said. ...

Meta announced in December that it was acquiring Manus, which has Chinese roots but is based in Singapore, in a rare case of a major U.S. tech group buying an AI company with strong links to China. Its deal with Manus, whose "general-purpose" AI agent can perform multistep complex work autonomously, was expected to help expand AI offerings across Meta's platforms. ...

See the full story here: https://www.npr.org/2026/04/27/g-s1-118892/china-blocks-meta-from-acquiring-ai-startup-manus

The Sphere Looked Like a Disaster. It’s Become a Huge Hit Instead.

...

Sphere has discovered an unexpected hit formula: the performance space that is the most cutting-edge technically works with bands whose frontmen are old enough to have decades of greatest hits that everyone knows by heart. And it can fill the arena with fans the age of its stars—U2’s Bono (65)—as well as vintage-curious Gen Z.

It stacks the calendar with established artists for residencies that can last days, weeks or months—pairing safe, broadly appealing musical acts with spectacular visual flair in shows that take months to produce: U2, Eagles, Kenny Chesney and Backstreet Boys, among others. During the day before a concert, it can charge $200 a ticket for “The Wizard of Oz,” remastered so audiences feel like they’re walking with Dorothy on the yellow brick road. ...

See the full story here: https://www.wsj.com/business/media/sphere-vegas-dolan-disaster-hit-fa0e6b17
