18 Nov 2021

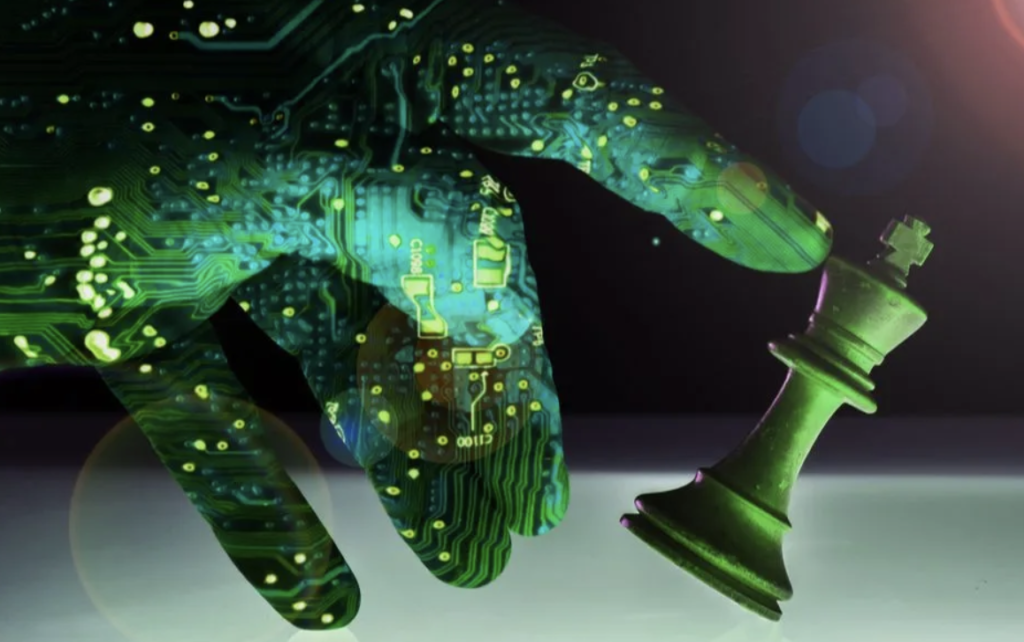

In the push for development, is the U.S. prepared to regulate AI?

There are three levels of unintended outcomes that AI regulators and practitioners must monitor:

- AI systems: They won’t deliver correct results every time. We need to design human oversight, often referred to as “human-in-the-loop,” into AI systems to create a system of checks and balances. Applications such as autonomous vehicles, which can cause direct and immediate harm, need safety regulations and standards.

- The business world: AI is creating a different way for companies to compete, and increasing access to data greatly changes market dynamics. We need regulations, similar to antitrust rules, for businesses that use AI technology and collect data.

- Societal impact: In the future, AI could displace human workers. However, strong regulations and restrictions on AI applications are not appropriate or adequate remedies for potential job loss.

See the full story here: https://venturebeat.com/2021/11/17/in-the-push-for-development-is-the-u-s-prepared-to-regulate-ai/

Filed under: Non-3D stories
