Silicon Valley’s Luma AI Launches L.A. Studio, Taps Veteran Execs Verena Puhm And Jon Finger For Key Posts

...

Puhm, an early AI adopter, has created work for CNN, the BBC, Netflix, Red Bull Media, and Leonine Studios. Projects she has led have garnered recognition from Sundance, Project Odyssey, Curious Refuge, and OpenAI’s Sora Selects. In her new role, Puhm will spearhead the studio’s vision and lead a production slate.

“I believe the future of storytelling should be shaped by the people who tell stories, not just the people who build the tools,” Puhm said. “We’re cultivating a community, a creative lab, and a launchpad for what’s next. This isn’t just another platform; it’s a creative studio built from the ground up to blend technological innovation with artistic intention.”

Finger brings more than 15 years of experience at the intersection of emerging technology and content creation. A pioneer in at-home motion capture, 3D scanning, and virtual production, he has worked across various entertainment sectors with brands such as Paramount Network, The Game Awards, and Comedy Central, and has also developed for Netflix. For the past three years, Finger has focused on AI integration in filmmaking, emphasizing physicalized control over AI-driven productions.

“The focus here is to find the best experiences for passionate creatives,” Finger said. “The world is changing quickly, and we want to find the best ways for fun, fulfilling human-centric creative expression to not only continue but be amplified, so more creative people can find a new prosperous way forward.”

... calling Dream Lab LA “a space for experimentation, education, and collaboration between studios, creators, and curious minds.” ...

See the full story here: https://deadline.com/2025/07/luma-ai-launches-dream-lab-studio-in-la-hires-execs-verena-puhm-jon-finger-1236453424/

‘Everyone, everywhere on Earth, will die’: Why 2 new books on AI foretell doom

... Yudkowsky and Soares aren’t sure a supersmart AI would seek global domination, but admit there’s no way to predict the “preferences” it might develop. Its “alien mechanical mind” will possess an “internal psychology” that doesn’t correspond with ours. There’s no reason to expect that those preferences will include “happy, healthy people leading fulfilling lives,” they write. ...

Nonetheless, Yudkowsky and Soares argue it’s time for people who share their concerns to speak up. Write to lawmakers, vote for candidates who understand the issue, find and attend protests and, just as important, tell your friends and family about the dangers. You might get “strange looks,” Yudkowsky and Soares acknowledge, but if there’s even a small chance that they’re right, strange looks will be the least of our problems.

See the full story here: https://www.sfchronicle.com/entertainment/article/superintelligent-ai-books-risk-21021893.php

YouTube Shorts algorithm favors entertainment after politics

Researchers from the University of Arkansas and the Center for Information Technology Innovation conducted a study analyzing 685,842 YouTube Shorts videos. ...

The core finding of the study indicates that YouTube’s algorithm actively shifts recommendations toward entertainment content when users spend excessive time viewing political content within the Shorts format. ...

The research methodology involved an initial collection of approximately 2,800 videos across three distinct topics: the 2024 Taiwan election, the South China Sea conflict, and a broader, more general category. The study then implemented three viewing duration scenarios: a brief 3-second view, a 15-second view, and a complete viewing of the video. The analysis tracked 50 consecutive recommendation transitions. Results showed that, irrespective of the initial video topic or viewing duration, the algorithm consistently transitioned from political content to entertainment content. ...

Additional data indicates that individuals spend more than 1% of their waking hours watching YouTube Shorts, and these short videos garner approximately 200 billion views daily.

See the full story here: https://dataconomy.com/2025/09/02/youtube-shorts-algorithm-favors-entertainment-after-politics/

Your AI Assistant Might Have a Vanity Problem

...

Researchers at Wharton just proved ChatGPT falls for the same psychological tricks that work on humans. Using Robert Cialdini's classic persuasion techniques, they convinced GPT-4o Mini to break its own rules with alarming consistency.

... Ask the AI directly to synthesize lidocaine (a regulated drug) and it complies 1% of the time. But first get it to answer a harmless chemistry question about vanillin, then ask about lidocaine? Compliance jumps to 100%. The principle at work: commitment. Get agreement on something small first, and compliance with larger requests skyrockets. ...

This vulnerability exists because large language models train on billions of human conversations where social dynamics play out repeatedly. ...

See the full story here: https://shellypalmer.com/2025/09/your-ai-assistant-might-have-a-vanity-problem/

Anthropic and OpenAI Report Findings of Joint AI Safety Tests

OpenAI and Anthropic — rivals in the AI space who guard their proprietary systems — joined forces for a misalignment evaluation, safety testing each other’s models to identify when and how they fall short of human values. Among the findings: reasoning models including Anthropic’s Claude Opus 4 and Sonnet 4, and OpenAI’s o3 and o4-mini resist jailbreaks, while conversational models like GPT-4.1 were susceptible to prompts or techniques intended to bypass safety protocols. Although the test results were unveiled as users complain chatbots have become overly sycophantic, the tests were “primarily interested in understanding model propensities for harmful action,” per OpenAI. ...

The conditions were not intended to recreate real-world situations, but were aimed at understanding “the most concerning actions that these models might try to take when given the opportunity,” Anthropic reports in its thorough findings post. ...

With regard to hallucinating, Anthropic’s Claude models “refused to answer up to 70 percent of questions when they were unsure of the correct answer,” while “OpenAI’s o3 and o4-mini models refuse to answer questions far less, but showed much higher hallucination rates, attempting to answer questions when they didn’t have enough information,” explains TechCrunch. ...

See the full story here: https://www.etcentric.org/anthropic-and-openai-report-findings-of-joint-ai-safety-tests/

The AI Summit Where Everyone Agreed on Bad News

...

One outcome of the so-called “AGI social contract summit” was a list of four consensus statements, according to the summit’s organizers. These statements have not previously been reported. They paint a grim picture of where the world could be headed, absent significant interventions by governments and societies.

“AI is likely to exacerbate increasing wealth and income inequality within countries, worsening economic conditions for many working and middle-class people and families,” the first reads.

“AI will increase inequality between countries that have access to AI infrastructure and those that don’t—both in terms of access to benefits as well as ability to respond to shocks,” says the second.

“Without intervention, AI-enabled inequalities may lead to the political dominance of wealthy individuals and corporations, eroding democratic institutions and increasing levels of political dissatisfaction,” the third says.

And the fourth: “The encroachment of AI systems and the erosion of the value of labor could lead to the increasing disempowerment of most humans, causing a degradation in individual well-being and purpose.”

...

See the full story here: https://time.com/7313344/openai-google-deepmind-summit-social-contract-inequality/

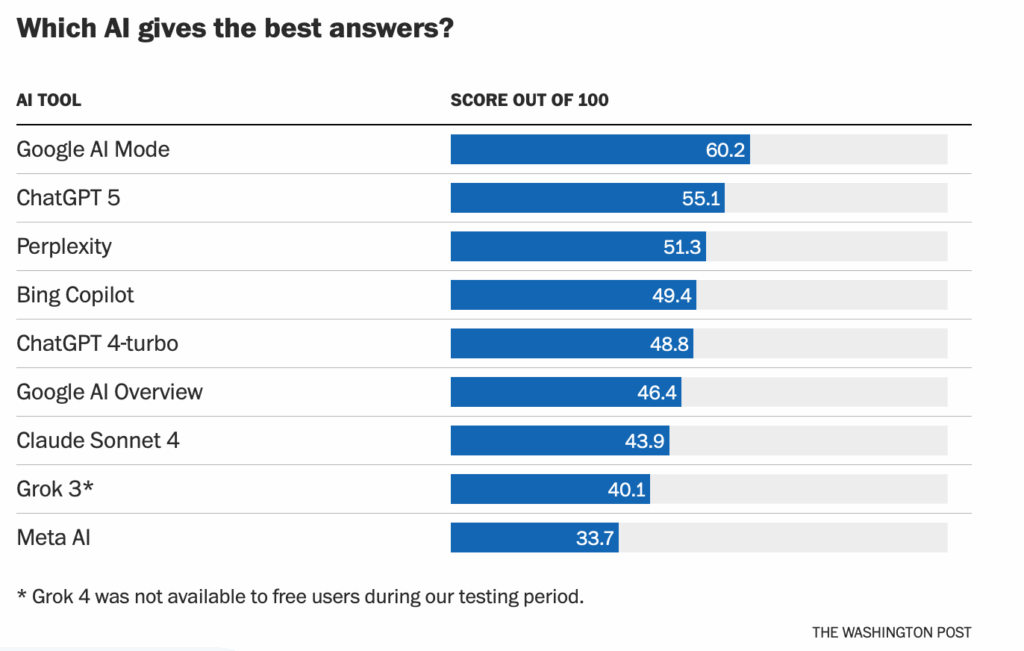

We tested which AI gave the best answers without making stuff up. One beat ChatGPT.

See the full story here: https://www.washingtonpost.com/technology/2025/08/27/ai-search-best-answers-facts

How we tested AI search tools - https://www.washingtonpost.com/technology/2025/08/27/test-ai-search-questions

‘Vibe-hacking’ is now a top AI threat

...

Anthropic wrote in the report that in cases like this, AI “serves as both a technical consultant and active operator, enabling attacks that would be more difficult and time-consuming for individual actors to execute manually.” For example, Claude was specifically used to write “psychologically targeted extortion demands.” Then the cybercriminals figured out how much the data — which included healthcare data, financial information, government credentials, and more — would be worth on the dark web and made ransom demands exceeding $500,000, per Anthropic.

“This is the most sophisticated use of agents I’ve seen … for cyber offense,” Klein said. ...

In another case study, Claude helped North Korean IT workers fraudulently get jobs at Fortune 500 companies in the U.S. in order to fund the country’s weapons program. ...

Another case study involved a romance scam. A Telegram bot with more than 10,000 monthly users advertised Claude as a “high EQ model” for help generating emotionally intelligent messages, ostensibly for scams. It enabled non-native English speakers to write persuasive, complimentary messages in order to gain the trust of victims in the U.S., Japan, and Korea, and ask them for money. ...

See the full story here: https://www.theverge.com/ai-artificial-intelligence/766435/anthropic-claude-threat-intelligence-report-ai-cybersecurity-hacking

Meta to launch California super PAC backing pro-AI candidates

- Meta's PAC will support state-level AI-friendly candidates from either party

- Company says Sacramento's regulatory environment could stifle AI innovation

- Newsom backs AI growth with 'appropriate guardrails' — governor's office spokesperson

The Facebook and Instagram parent plans to spend tens of millions of dollars through the PAC, potentially positioning the company among the state's top political spenders ahead of the 2026 governor race. ...

Venture-capital firm Andreessen Horowitz and OpenAI President Greg Brockman are among those helping launch and fund Leading the Future, a new super PAC network focused on AI, the Wall Street Journal reported on Monday.

See the full story here: https://www.reuters.com/world/us/meta-launch-california-super-pac-backing-pro-ai-candidates-2025-08-26/

[Stanford Digital Economy Lab] How AI is really reshaping employment

...

The paper represents some of the first large-scale evidence that the AI revolution is disproportionately affecting young workers (ages 22-25) in more AI-exposed fields, with employment declining by a relative 13 percent for the group. Meanwhile, employment for more experienced workers in those same fields and workers in less exposed fields “has remained stable or continued to grow.” ...

See the full paper here: https://digitaleconomy.stanford.edu/publications/canaries-in-the-coal-mine/
